What Is a Lead Scoring System (and Why Most Teams Build It Backwards)

Your sales team is burning time. Not because leads are scarce, but because reps are spending equal energy on a VP of Sales at a 400-person SaaS company and a student with a Gmail address who downloaded one ebook. Both came through the same funnel. Neither got the attention it deserved.

A lead scoring system fixes this by assigning numeric values to prospect traits and behaviors, so your team knows exactly who to call first. Done well, it delivers measurable results: research from industry experts puts the marketing ROI lift from effective lead scoring at 77%, and teams that implement proper scoring see 15–25% conversion rates from qualified leads to closed deals.

Done badly — and most teams do it badly — lead scoring becomes a bureaucratic point system that nobody trusts and everyone ignores. This guide walks you through building one that actually works, from ICP definition through automation and ongoing refinement.

Step 1: Define Your Ideal Customer Profile Before You Touch a Spreadsheet

The single biggest mistake revenue teams make is building a scoring model before they know who they are actually trying to score. You end up awarding points to signals that feel important but have no correlation to closed revenue.

Start by pulling your last 50 closed-won deals and looking for patterns across three dimensions:

- Firmographic fit: company size, industry vertical, revenue range, geography

- Contact fit: job title, seniority, department, decision-making authority

- Behavioral fit: what actions did these buyers take before they converted?

This analysis will surface your real Ideal Customer Profile — not the aspirational one from a whiteboard session, but the one supported by actual closed revenue. If 80% of your best customers are Directors or VPs at companies with 100–500 employees in the SaaS vertical, that becomes the backbone of your demographic scoring.
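The closed-won analysis above can be sketched as a simple tally. This is a minimal illustration, not a CRM integration: the `closed_won` records, their field names, and the `size_band` cut-offs are all hypothetical stand-ins for whatever your own export contains.

```python
from collections import Counter

# Hypothetical sample of closed-won deals; field names are illustrative only.
closed_won = [
    {"seniority": "VP", "employees": 320, "industry": "SaaS"},
    {"seniority": "Director", "employees": 150, "industry": "SaaS"},
    {"seniority": "Director", "employees": 480, "industry": "SaaS"},
    {"seniority": "Manager", "employees": 75, "industry": "Fintech"},
    {"seniority": "VP", "employees": 210, "industry": "SaaS"},
]


def size_band(employees):
    """Bucket a headcount into a coarse band (assumed cut-offs)."""
    if employees < 50:
        return "<50"
    if employees < 100:
        return "50-99"
    if employees <= 500:
        return "100-500"
    if employees <= 1000:
        return "501-1000"
    return ">1000"


def attribute_shares(deals, key_fn):
    """Return (value, share of deals) pairs, most common value first."""
    counts = Counter(key_fn(deal) for deal in deals)
    total = len(deals)
    return [(value, count / total) for value, count in counts.most_common()]


for label, key_fn in [
    ("industry", lambda d: d["industry"]),
    ("company size", lambda d: size_band(d["employees"])),
    ("seniority", lambda d: d["seniority"]),
]:
    print(label, attribute_shares(closed_won, key_fn))
```

Attributes where one value holds a dominant share of deals are the candidates for your highest fit-score weights.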

Tools like Clearbit (now HubSpot Breeze Intelligence) and Cognism can enrich your CRM records with firmographic and technographic data automatically, making this ICP analysis far faster than manual research. If you are running a high-volume B2B operation, the enrichment step is not optional; it is foundational.

Step 2: Build a Two-Dimensional Model — Fit Score Plus Behavior Score

Most lead scoring tutorials describe a single score. This is the wrong architecture. A lead can be a perfect demographic fit but show zero buying intent. Conversely, a lead can be highly engaged with your content but represent a company that will never become a customer. You need to measure both dimensions separately before combining them.

The Fit Score: Who They Are

Fit scoring is based on largely static attributes: data that changes rarely, if at all. These are the characteristics that determine whether a prospect could buy, not whether they want to. Start with five to seven criteria that predict the majority of your conversions:

- Job title / seniority (e.g., +20 for VP or Director, +10 for Manager, 0 for individual contributor in non-buying roles)

- Company size (e.g., +15 for 100–500 employees, +10 for 501–1,000, +5 for 50–99)

- Industry match (e.g., +15 for core verticals, +5 for adjacent verticals, 0 for out-of-ICP)

- Technology stack (e.g., +10 if they use complementary tools in your integration ecosystem)

Negative fit scoring is equally important. Deduct points for competitor employees, personal email addresses on a B2B form, student accounts, and companies that are too small to afford your product. These negative signals are where most systems fail — without them, you are scoring noise as signal.
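A fit score along these lines might look like the sketch below. The positive point values follow the examples above. The penalty sizes, the `Lead` fields, and the domain and industry sets are assumptions made for illustration, since the article names the negative signals but not their weights.

```python
from dataclasses import dataclass

# Assumed lookup sets; replace with your own ICP definitions.
PERSONAL_DOMAINS = {"gmail.com", "yahoo.com", "outlook.com", "hotmail.com"}
CORE_INDUSTRIES = {"saas"}
ADJACENT_INDUSTRIES = {"fintech", "ecommerce"}
SENIORITY_POINTS = {"vp": 20, "director": 20, "manager": 10}


@dataclass
class Lead:
    """Hypothetical lead record; field names are not tied to any CRM."""
    email: str
    seniority: str
    employees: int
    industry: str
    uses_complementary_tool: bool = False
    is_competitor: bool = False
    is_student: bool = False


def fit_score(lead):
    """Sum positive fit points and subtract penalties for negative signals."""
    score = SENIORITY_POINTS.get(lead.seniority.lower(), 0)

    if 100 <= lead.employees <= 500:
        score += 15
    elif 500 < lead.employees <= 1000:
        score += 10
    elif 50 <= lead.employees < 100:
        score += 5
    elif lead.employees < 50:
        score -= 10  # assumed penalty: too small to afford the product

    industry = lead.industry.lower()
    if industry in CORE_INDUSTRIES:
        score += 15
    elif industry in ADJACENT_INDUSTRIES:
        score += 5

    if lead.uses_complementary_tool:
        score += 10

    # Negative signals; all three penalty sizes are assumptions.
    domain = lead.email.rsplit("@", 1)[-1].lower()
    if domain in PERSONAL_DOMAINS:
        score -= 15
    if lead.is_competitor:
        score -= 50
    if lead.is_student:
        score -= 20
    return score
```

With these weights, the VP at a 400-person SaaS company from the introduction lands at 50 or more, while a student on a Gmail address goes negative instead of sitting at zero alongside merely unknown leads.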

The Behavior Score: What They Do

Behavioral scoring captures purchase intent. It measures actions a lead takes that indicate they are actively evaluating solutions. Prioritize high-intent behaviors over passive engagement:

- Pricing page visit: +25 points (highest intent signal short of a direct request)

- Demo or trial request: +30 points (near-MQL by itself)

- ROI calculator completion: +20 points

- Case study download: +15 points

- Webinar attendance (live): +10 points

- Blog post view (single visit): +2 points

- Email open: +1 point

- Unsubscribe from email: −15 points

The distinction between a pricing page visit (+25) and a blog read (+2) reflects a core principle: the quality of a behavioral signal matters far more than the volume of engagement. A lead who visits your pricing page once (+25) is more likely to convert than one who reads ten blog posts (+20 in total) without ever showing commercial interest, and the weights are set so the score says the same thing.
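The point table above maps naturally onto a lookup. In this minimal sketch the event names are hypothetical labels; in practice they would come from whatever your tracking or automation platform emits.

```python
# Point values from the list above; event keys are illustrative names.
BEHAVIOR_POINTS = {
    "demo_or_trial_request": 30,
    "pricing_page_visit": 25,
    "roi_calculator_completed": 20,
    "case_study_download": 15,
    "webinar_attended_live": 10,
    "blog_post_view": 2,
    "email_open": 1,
    "email_unsubscribe": -15,
}


def behavior_score(events):
    """Sum points for a sequence of event names; unknown events score 0."""
    return sum(BEHAVIOR_POINTS.get(event, 0) for event in events)


one_pricing_visit = behavior_score(["pricing_page_visit"])
ten_blog_views = behavior_score(["blog_post_view"] * 10)
print(one_pricing_visit, ten_blog_views)
```

A single pricing page visit outscores ten blog views, which is the weighting of quality over volume that the model is meant to encode.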

Step 3: Set Your MQL Threshold and Scoring Scale

Once you have your scoring criteria, you need to decide what number makes someone a Marketing Qualified Lead (MQL). The research from practitioners who have run these systems at scale points to a consistent benchmark: set your MQL threshold to capture the top 20% of leads by score.

On a 100-point scale, this typically translates to a threshold between 50 and 75 points. Here is what that looks like in practice across different lead quality tiers:

| Score Range | Lead Status | Recommended Action | Typical Conversion Rate |
|---|---|---|---|
| 75–100 | Hot / Sales Ready | Immediate sales outreach (within 1 hour) | 20–25% |
| 50–74 | MQL / Warm | Sales-assisted nurture sequence | 10–15% |
| 25–49 | Marketing Nurture | Automated email sequences, retargeting | 3–7% |
| 0–24 | Cold / Unqualified | Low-frequency nurture or disqualify | Under 2% |
| Negative | Disqualified | Remove from active pipeline | Near 0% |

The conversion rate column here is not aspirational — it reflects what organizations with mature scoring systems actually report. If your numbers are significantly lower, the problem is almost always that your MQL threshold is too low (you are passing too many unqualified leads to sales) or your negative scoring is too weak.
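To make the tiers and the top-20% rule concrete, here is a hedged sketch. The tier boundaries come from the table. The short tier labels and the `top_fraction_threshold` helper are illustrative choices, and the helper assumes you can export current scores as a plain list.

```python
import math


def lead_tier(score):
    """Map a total score to a tier using the boundaries in the table."""
    if score < 0:
        return "disqualified"
    if score >= 75:
        return "hot"
    if score >= 50:
        return "mql"
    if score >= 25:
        return "nurture"
    return "cold"


def top_fraction_threshold(scores, fraction=0.20):
    """Return the lowest score that still falls in the top `fraction` of leads."""
    if not scores:
        raise ValueError("scores must not be empty")
    ranked = sorted(scores, reverse=True)
    keep = max(1, math.floor(len(ranked) * fraction))
    return ranked[keep - 1]


sample_scores = list(range(10, 101, 10))  # 10, 20, ..., 100
print(top_fraction_threshold(sample_scores), lead_tier(74))
```

Leads tied at the threshold all qualify, so the share passed to sales can run slightly above the target fraction. If the computed threshold falls well outside the 50 to 75 range, that is a hint the point values need rescaling rather than the cut-off.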

Tools like HubSpot Marketing Hub allow you to automate lead handoff workflows triggered by score thresholds, so a lead crossing 75 points can automatically create a task in your CRM and notify the assigned rep — no manual review required.

Step 4: Implement Score Decay to Keep Your Pipeline Current

Here is a scenario that breaks most lead scoring systems: a prospect scored 72 points eight months ago after a flurry of activity, then went completely silent. They are still sitting at 72 points in your CRM while a fresher lead at 55 points is actively revisiting your site every week. Which one does your system surface?

Without score decay, you surface the stale 72-point lead. This is a genuine problem in high-volume pipelines.

The fix is time-based decay: reduce behavioral scores by a fixed percentage each month where no qualifying activity occurs. The benchmark used by practitioners who have refined these systems is 25% monthly decay on behavioral points without new activity. Demographic/fit scores do not decay — a person's job title does not expire — but intent signals absolutely do.

Implementing Decay in Practice

In most marketing automation platforms, you implement decay through a scheduled workflow that runs monthly and reduces behavioral score fields by a multiplier. In HubSpot, this is done with calculated properties or workflow-based score adjustments. In Salesforce-based systems, it is typically handled through a scheduled Apex job or a third-party scoring tool.

The practical outcome: at 25% monthly decay, a lead who scored 60 behavioral points and has been silent for three months drops to roughly 25 points (60 × 0.75³ ≈ 25.3). If they re-engage (return to the pricing page, watch a product video), their score climbs back up, this time with more confidence that the activity is genuine buying intent rather than a one-time curiosity visit.
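The decay arithmetic can be checked with a few lines. This sketch assumes compounding decay (each silent month removes 25% of whatever behavioral score remains) and keeps the fit score untouched; the function names are illustrative and do not correspond to any platform feature.

```python
def decayed_behavior_score(score, silent_months, monthly_decay=0.25):
    """Apply compounding monthly decay to a behavioral score."""
    if silent_months < 0:
        raise ValueError("silent_months must be non-negative")
    return score * (1 - monthly_decay) ** silent_months


def current_total_score(fit, behavior, silent_months, monthly_decay=0.25):
    """Fit points do not decay; only the behavioral part is reduced."""
    return fit + decayed_behavior_score(behavior, silent_months, monthly_decay)


for months in range(4):
    print(months, round(decayed_behavior_score(60, months), 1))
```

In a real system, the month counter would reset whenever a qualifying activity is logged, so only genuinely silent leads lose points.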

Step 5: Choose the Right Tools to Automate and Scale Your System

A lead scoring system built in a spreadsheet is not a scoring system — it is a scoring experiment. For anything beyond the earliest stage of testing, you need tooling that can ingest behavioral signals in real time, apply your rules automatically, and sync scores to your CRM without manual intervention.

Data Enrichment Layer

Your fit scoring is only as accurate as the data behind it. If contacts in your CRM are missing company size, industry, or job seniority — and most are, when leads self-report — your demographic scores are unreliable. ZoomInfo and Cognism both provide enrichment APIs that can fill these gaps at the point of form submission or through bulk CRM enrichment runs. ZoomInfo's database covers over 100 million business contacts; Cognism offers particularly strong coverage for European markets with phone-verified mobile numbers.

For teams already in the HubSpot ecosystem, Clearbit (now HubSpot Breeze Intelligence) integrates natively and enriches records without leaving the platform.

Intent and Prospecting Layer

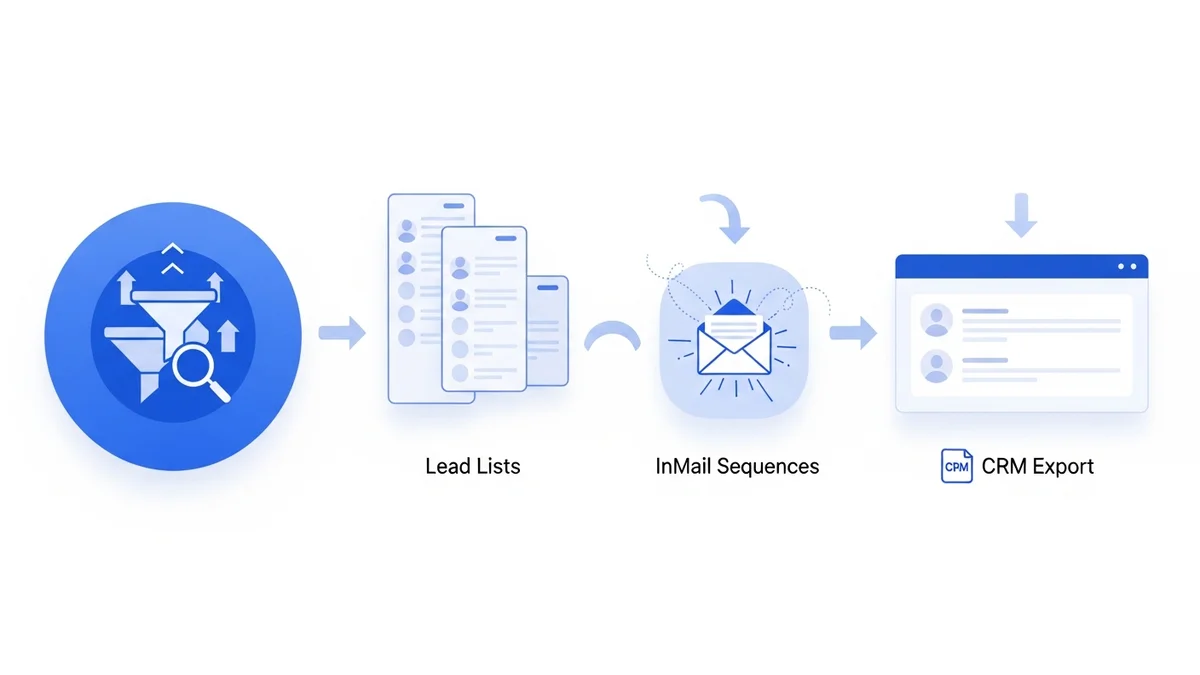

Beyond form submissions, tools like Apollo.io surface buying intent signals from third-party sources — indicating when a company is actively researching topics relevant to your product. This kind of intent data can trigger a score bump even before a prospect has visited your website, giving sales a meaningful head start.

Conversion and Landing Page Layer

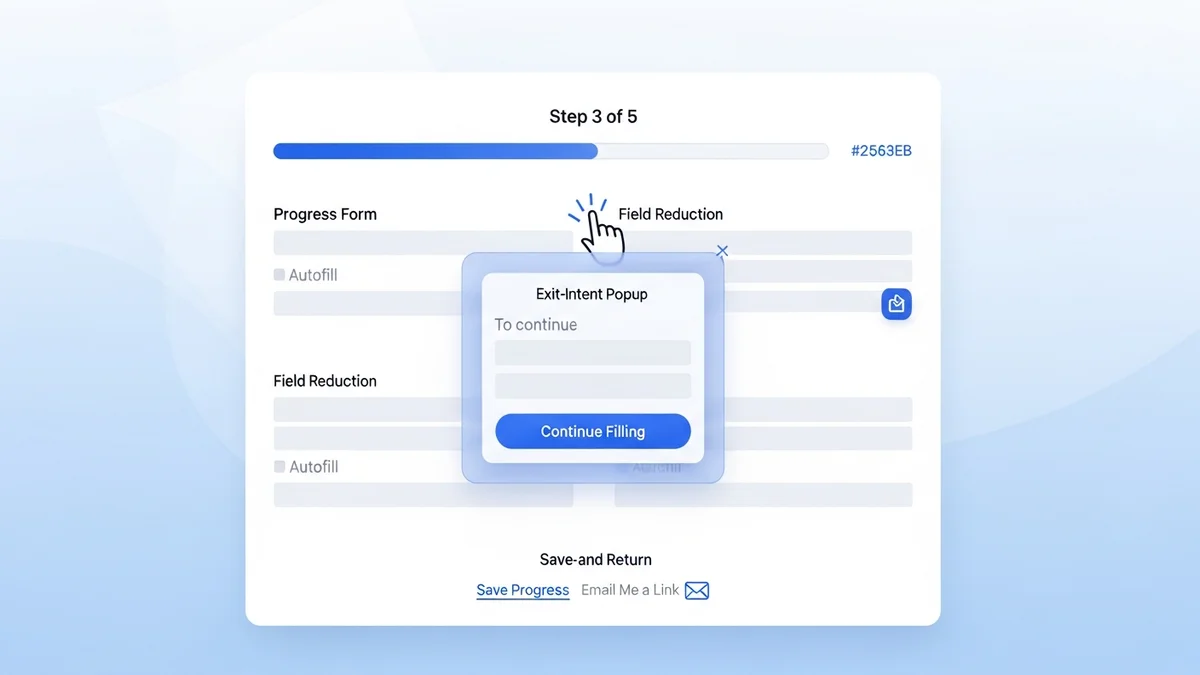

Your behavioral signals are only as good as the quality of the touchpoints generating them. A high-converting landing page is not just good for volume — it captures better-qualified leads who self-select into specific offers. Tools like Unbounce and Leadpages let you build dedicated pages for high-intent offers (demo requests, ROI calculators, pricing guides) that generate the behavioral signals your scoring model values most.

Step 6: Align Sales and Marketing on the Rules Before You Launch

The most technically sophisticated lead scoring system will fail if sales does not trust the scores it produces. And sales will not trust scores they had no input in creating.

Before you launch your scoring model, hold a joint session with sales and marketing to agree on three things:

- What an MQL actually means. If sales believes an MQL should already have expressed explicit buying intent, but marketing is passing anyone above 50 points, you have a definition problem that no amount of scoring will fix.

- What behaviors sales finds predictive. Sales reps who work deals every day often know which early signals correlate with a close. Their pattern recognition is data you should be encoding into your scoring rules.

- How scores will be surfaced in the CRM. A score that is buried in a custom field nobody checks is useless. Agree on where scores appear in your sales workflow and how they trigger actions.

Research consistently shows this alignment is not a soft, optional step. Organizations that achieve solid sales-marketing alignment on lead definitions report significantly better pipeline quality and shorter sales cycles. The scoring model is the mechanism; the agreement on what good looks like is the foundation.

How to Validate and Continuously Improve Your Scoring Model

Your first version of a lead scoring model will be wrong. This is not a flaw — it is an expected property of any model built on limited historical data and human assumptions. The teams that extract lasting value from lead scoring are the ones who treat their model as a living system and review it on a structured cadence.

Quarterly Model Reviews

Every 90 days, pull a report comparing score at MQL handoff to deal outcome. You are looking for two patterns:

- High scores that did not convert: these indicate you are awarding too many points for signals that do not actually predict purchase. Reduce those point values.

- Low scores that did convert: these are missed signals — behaviors or attributes that predicted a close but that your model is under-weighting. Increase those values.
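One way to find which point values to adjust is to compare the win rate of leads carrying each signal against the overall win rate. The sketch below assumes each reviewed lead can be exported as a set of signal names plus a won/lost flag; the signal names and sample data are hypothetical.

```python
from collections import defaultdict


def signal_win_rates(leads):
    """Return {signal: win rate} over leads given as (signals, won) pairs."""
    seen = defaultdict(int)
    wins = defaultdict(int)
    for signals, won in leads:
        for signal in signals:
            seen[signal] += 1
            if won:
                wins[signal] += 1
    return {signal: wins[signal] / seen[signal] for signal in seen}


def overall_win_rate(leads):
    """Share of all reviewed leads that closed."""
    leads = list(leads)
    return sum(1 for _, won in leads if won) / len(leads)


# Hypothetical quarter of handed-off leads.
quarter = [
    ({"pricing_page_visit", "blog_post_view"}, True),
    ({"blog_post_view"}, False),
    ({"pricing_page_visit"}, True),
    ({"blog_post_view", "webinar_attended_live"}, False),
]

baseline = overall_win_rate(quarter)
for signal, rate in sorted(signal_win_rates(quarter).items()):
    verdict = "consider raising" if rate > baseline else "consider lowering"
    print(f"{signal}: {rate:.2f} vs baseline {baseline:.2f} ({verdict})")
```

Treat the output as a prompt for discussion rather than an automatic rule: with only a handful of leads per signal, a win rate can swing widely from one quarter to the next.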

AI-Augmented Scoring

Once you have six to twelve months of scored lead data with deal outcomes, you have enough training data for predictive modeling. AI-driven scoring goes beyond rule-based point assignment — it identifies non-obvious correlations in your data that human analysis would miss. For example, it might surface that leads who visit your integration documentation page within 48 hours of a demo request close at twice the rate of those who do not — a signal you would never think to manually encode.

Platforms like HubSpot and Monday CRM offer AI scoring features that learn from your historical deal data and continuously adjust weights. The catch: these systems need clean, consistent data to perform well. The manual work of defining your ICP and cleaning your CRM is not replaceable by AI — it is a prerequisite for AI scoring to function.

For teams handling large lead volumes across multiple channels, investing in this automation layer is not a luxury. Research from professional services firms found that 23–31% of business development time is wasted on leads that will never close. At $300–$1,000 per hour for senior team members, the cost of a poorly calibrated scoring model is not abstract — it shows up directly in your pipeline economics.

Build the model deliberately, align your teams around it, automate where the data supports it, and review it quarterly. That is the actual system — not the spreadsheet, not the point values, but the ongoing discipline of connecting scoring to revenue outcomes and adjusting accordingly.